One of the skills everyone needs to have is asking for help. Whether that’s in our work, our education, or our personal lives, we all need help from time to time. We are focused on work here, but the same basic rules apply in all aspects of our lives.

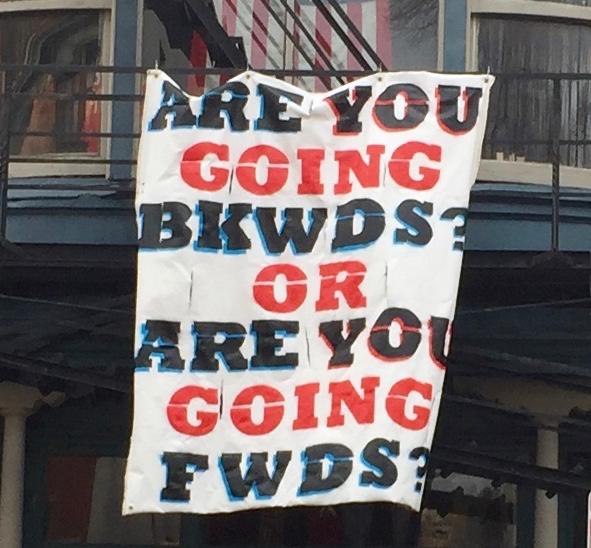

The right time to ask for help is, like so many things, a balancing act. Struggling through a complex issue can be a great way to learn something new. But often we can shortcut that learning by simply asking the right questions at the right time.

On the flip side, if we ask too early, we not only risk missing a chance to build deep understanding, we also risk frustrating colleagues by asking them to do our job.

One shortcut for knowing when you need to ask for help: another team member asks whether you have already asked. Generally, I want to have called for support before my PM suggests it. By then they are frustrated that I haven’t already solved the issue on my own.

Signs You Might Need Help

Given my current role and skill set, I’m often the person who gets called when a project goes sideways. That means I see a lot of places where someone didn’t call for help until they were in crisis. While that’s going to happen to us all from time to time, it’s better to call for help when the problem is small. If you wait until the project starts burning down around you, it’s way too late.

You might need help if:

- You have absolutely no idea what to do next.

- You are about to re-design a large portion of your project to get around a challenge.

- You have spent more than a day pounding on a problem without success.

- You are avoiding working on a task, because you don’t know how to get started.

- You are about to use a mode/tool/technique that everyone says is a bad idea.

  - In Salesforce that can mean things like:

    - loading data in serial mode

    - setting batch size to 1

    - using view all data in your tests

  - In Drupal that can mean things like:

    - hacking a module

    - loading data in the theme layer

    - writing raw SQL queries


What to Do Before Asking for Help

As I said before, asking for help is a balance: you can wait too long, or you can ask too soon. The real trick is hitting the sweet spot.

There are several things you should always do before taking another person’s time.

- Google It! I kinda can’t believe I have to say that, but not everyone bothers.

- Make sure you can explain the question clearly. If you don’t know where you got stuck, how can I help you get unstuck? And thinking it through might make the answer obvious.

- Develop a theory. When asking for help, it can be useful to pose a theory about an approach. Even if you’re wrong, it may help me understand your thinking.

- Try a few things. Experimenting with what’s going wrong can help you formulate your question, and may help me shortcut my research if you have eliminated obvious issues.

- Explain the problem to your dog, cat, rabbit, stuffed animal, etc. As someone who spends time being a human rubber duck, I can often tell when someone has already tried to explain it once.

Where/Who to Ask for Help

For me, the hardest part is knowing who to ask.

As a consultant, I try to avoid asking questions in places where clients may see them. Our clients pay for us to be experts; they do not want to see us asking questions in public, particularly if the question has a simple answer.

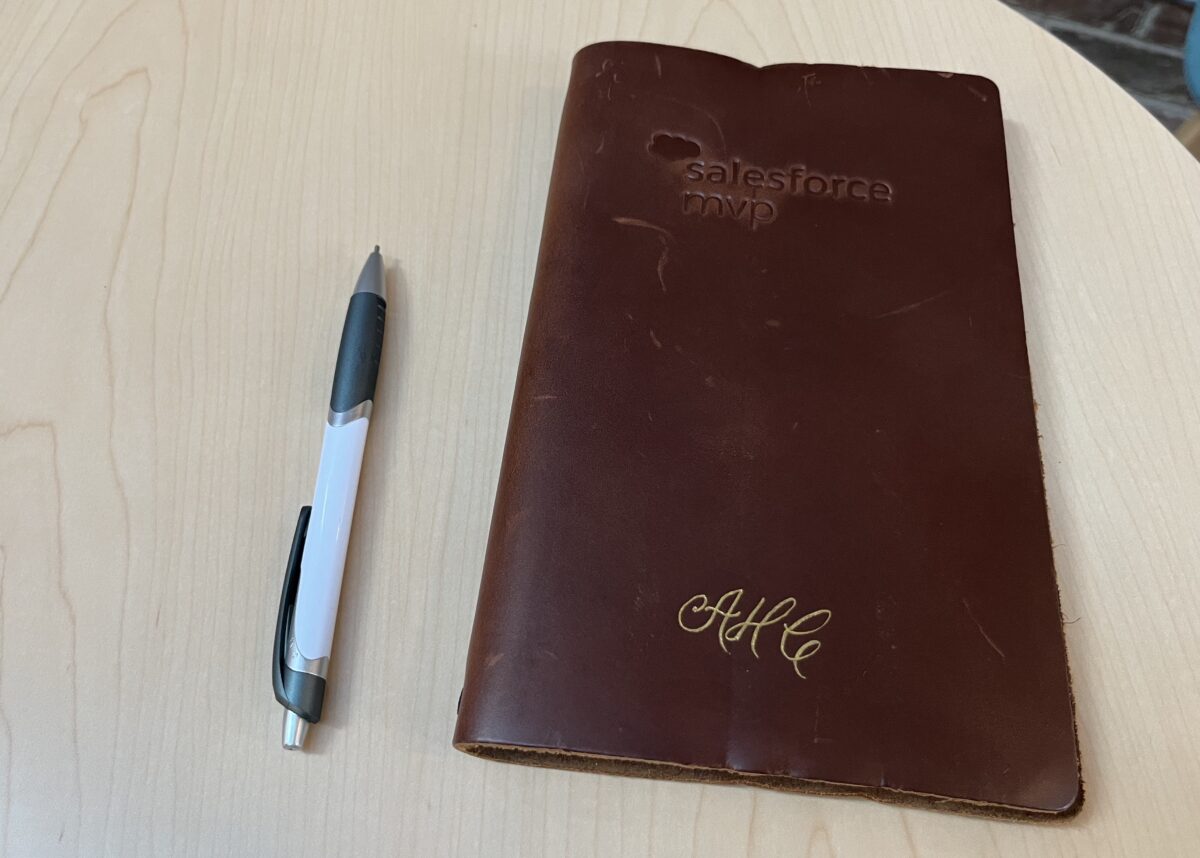

As a Salesforce MVP, one of my favorite perks is the MVP Slack channel, where we ask each other questions that run the full range of complexity. While access to a community that exclusive, and that advanced, is a privilege, there are other ways to find similar groups, like your local user group.

I love having a good internal network of people to ask for help. Most of the places I have worked at as a consultant have had some kind of informal place to ask questions and help each other out. If you work in a consultancy, find or create such a back channel.

If concerns about being seen by clients aren’t relevant to you, check out this list of 7 Salesforce Communities to Join recommended by Salesforce Ben.

Help Build a Helpful Community

The final thing to know about asking for help is that it’s important to offer help as well. A good question can be valuable to someone else who has the same issue in the future. A good answer is helpful to both the person who asked the question and the person who comes looking in the future.

Offering answers, even if they’re not perfect answers, is a great way to learn and to encourage others to seek help. Any time I post a question on Stack Exchange, I try to hunt around for one or two to answer as well. That both allows me to pay it forward and helps set the tone that people can be experts in one thing while still needing help in another.

Smart people need help, and should be comfortable asking for it.